Memory Driven Computing

Next-generation sequencing (NGS) and its manifold applications are transforming the life and medical sciences, as reflected by an exponential 860-fold increase in published data sets during the last 10 years. Considering human genome sequencing alone, the volume of genomic data generated over the next decade has been estimated to exceed three exabytes (3 × 10¹⁸ bytes). NGS data are projected either to match data collections such as those in astronomy or on YouTube, or to become the most demanding of them in terms of data acquisition, storage, distribution, and analysis. So far, this data avalanche has been met simply by increasing compute power: building larger high-performance computing (HPC) clusters with more processors and cores, and scaling out and centralizing data storage (cloud solutions). While this approach is still viable today, it can already be foreseen that it is not sustainable.
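As a rough illustration of what "exponential" means here: if the 860-fold increase over 10 years is assumed to come from a constant yearly growth factor (a simplification; actual year-to-year growth varies), that factor is 860^(1/10). The Python sketch below computes it together with the implied doubling time; the function names are illustrative only.

```python
import math


def annual_growth_factor(total_fold: float, years: float) -> float:
    """Constant yearly multiplier that compounds to `total_fold` over `years`."""
    return total_fold ** (1.0 / years)


def doubling_time(total_fold: float, years: float) -> float:
    """Years needed to double under the same constant exponential growth."""
    return years * math.log(2) / math.log(total_fold)


if __name__ == "__main__":
    # Figures from the text: 860-fold more published data sets in 10 years.
    factor = annual_growth_factor(860, 10)
    print(f"growth per year: {factor:.2f}x")
    print(f"doubling time:   {doubling_time(860, 10):.2f} years")
```

With these inputs the yearly factor comes out at roughly 1.97, so under the constant-growth assumption the number of published data sets roughly doubled every year.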

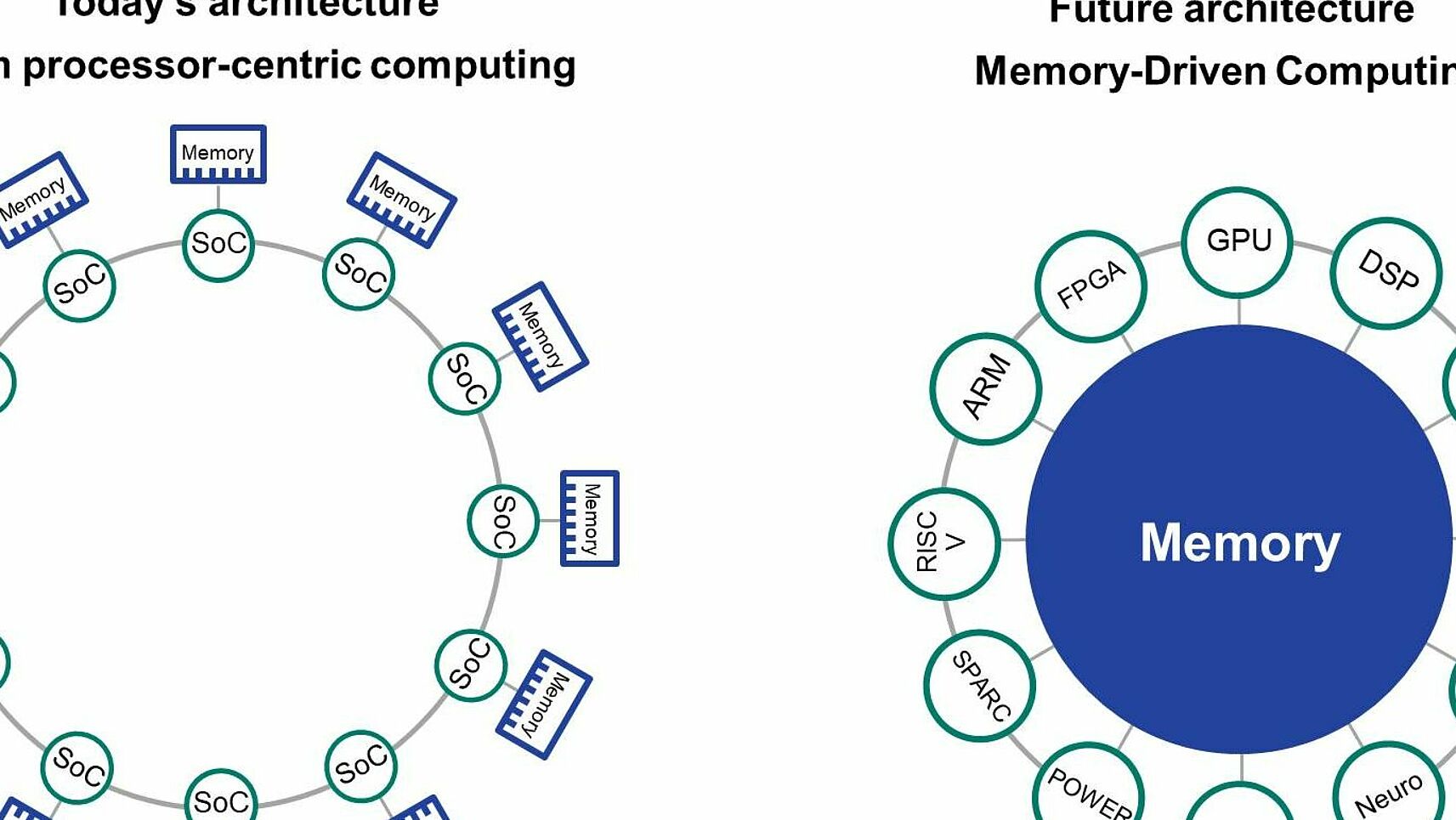

Traditionally, computers are based on the von Neumann architecture, and such systems are scaled by adding more resources, as in clusters or supercomputers. This kind of scaling is limited by the end of Moore’s law. One approach to overcoming this limit is memory-driven computing, a paradigm shift that puts memory at the center of the compute infrastructure to support today’s data-driven applications. A pool of devices is connected into a single environment through the optical Gen-Z fabric. With Gen-Z, up to 4096 yottabytes of memory can be addressed across up to 16 million devices, making it possible to connect the computational power of 1600 exascale computers.
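The core idea, many compute tasks working on one shared pool of memory instead of each receiving its own copy of the data, can be illustrated on a single machine. The Python sketch below is an analogy only: a named block from the standard library's `multiprocessing.shared_memory` module stands in for the fabric-attached memory pool, and the helper names (`publish`, `count_symbol`, `split`) and the toy DNA sequence are hypothetical. It does not use Gen-Z or any HPE programming interface.

```python
from multiprocessing import shared_memory

# Analogy only: a named shared-memory block stands in for the fabric-attached
# memory pool. This is not Gen-Z or any HPE API; all names are hypothetical.


def publish(data: bytes) -> shared_memory.SharedMemory:
    """Copy the data once into a named pool that any task can attach to."""
    pool = shared_memory.SharedMemory(create=True, size=len(data))
    pool.buf[: len(data)] = data
    return pool


def count_symbol(pool_name: str, start: int, stop: int, symbol: str) -> int:
    """A compute task: attach to the pool by name and scan its slice in place.

    Only the pool name and two offsets are passed in; the data itself is
    neither serialized nor copied to the task.
    """
    target = ord(symbol)
    pool = shared_memory.SharedMemory(name=pool_name)
    try:
        return sum(1 for i in range(start, stop) if pool.buf[i] == target)
    finally:
        pool.close()  # detach this task; the pool itself stays alive


def split(length: int, parts: int) -> list:
    """Divide [0, length) into at most `parts` contiguous (start, stop) ranges."""
    step = -(-length // parts)  # ceiling division
    return [(s, min(s + step, length)) for s in range(0, length, step)]


if __name__ == "__main__":
    sequence = b"ACGTGGCCAATTGCGC" * 1000  # toy data, not real genomic input
    pool = publish(sequence)
    try:
        partial = [count_symbol(pool.name, a, b, "G") for a, b in split(len(sequence), 4)]
        print("G count from 4 tasks:", sum(partial))
    finally:
        pool.close()
        pool.unlink()  # release the pool once every task is done
```

Each task receives only the pool's name and two offsets, so adding tasks does not multiply the memory footprint. In a memory-driven system the pool would be memory attached to the fabric and reachable from many nodes; here the tasks simply run one after another in a single process to keep the sketch self-contained.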

Presentations

- Hartmut Schultze; Launch event: Artificial Intelligence: A European perspective; 5.12.2018; Brussels, Belgium; https://ec.europa.eu/jrc/en/event/conference/launch-event-jrc-report-ai-watch

- Joachim Schultze; HPE Discover 2018, executive interview; 27.11.2018; Madrid

- Matthias Becker, Hartmut Schultze; HPE Discover 2018, exhibit and demo; 27.11.2018 - 29.11.2018; Madrid

- Hartmut Schultze; ITAPA 2018; 13.11.2018; Bratislava, Slovakia; https://www.itapa.sk/8104-en/medzinarodny-kongres-itapa-2018

- Joachim L. Schultze; Quo vadis: Artificial Intelligence in Medicine and Life Sciences; Petersberger Gespräche; 109.2018; Bonn

- Matthias Becker; Accelerating genomic data processing with Memory Driven Computing; Institute for Genomic Statistics and Bioinformatics, University of Bonn; 30.10.2018; Bonn

- Joachim L. Schultze; Memory-Driven Computing for Alzheimer’s research, visit of Member of the Bundestag Steier to the DZNE; 22.08.2018; Bonn

- Matthias Becker, Joachim L. Schultze; Memory Driven Computing and Genomics; Max Planck Institute for Radio Astronomy; 7.02018; Effelsberg

- Joachim L. Schultze; CEBIT 2018; Federal Minister Karliczek visits the DZNE at CEBIT; 13.06.2018; Hannover; https://www.dzne.de/im-fokus/meldungen/2018/bundesministerin-karliczek-besucht-das-dzne-auf-der-cebit/

- Joachim L. Schultze, Hartmut Schultze; CEBIT 2018 d!talk; 13.06.2018; Hannover; https://www.youtube.com/watch?v=Fl1ByJ5s7GU

- Joachim L. Schultze; The second genomic revolution; Music & Brain; 05.06.2018; Bonn

- Hartmut Schultze; Workshop ‘Memory-driven Computing for Big Data Analytics’; 30.05.2018; Univ. of Applied Sciences (HTW) Berlin; http://bigdata.htw-berlin.de/18/workshop_en.pdf, http://bigdata.htw-berlin.de/18/Memorandum_BigDataAnalytics.pdf

- Joachim L. Schultze; Evaluation of the programme-oriented research of the Helmholtz Association: PRECISE Platform for Single Cell Genomics and Epigenomics including Memory Driven Computing; 11.04.2018; Bonn

- Joachim L. Schultze; Bitkom Events; Big-Data Summit 2018; Vision for a Radical New Kind of Compute Architecture in the Age of Big Data; 28.02.2018; Hanau; https://big-data.ai/computing-vision-radical-new-kind-compute-architecture-age-big-data, https://www.youtube.com/watch?v=s5lZ7z7EXP0

- Matthias Becker; Big data and genomic data processing with “The Machine”; Java User Group; 16.11.2017; Hannover

- Matthias Becker; theCUBE; The Machine HPE Discover Madrid 2017; 29.11.2017; Madrid; https://www.youtube.com/watch?v=c-vqsQPbZuk

- Joachim L. Schultze, Matthias Becker; The Machine project and Memory-Driven Computing: Powering tomorrow’s AI-driven enterprise; HPE Discover 2017; 29.11.2017; Madrid; https://www.youtube.com/watch?v=IfzQ9iVSod0, https://www.youtube.com/watch?v=ohPmvkFRukg

- Joachim L. Schultze, Pierluigi Nicotera; General Session; HPE Discover 2017; 05.06.2017; Las Vegas; https://www.youtube.com/watch?v=zIc-i_BdH18&t=7843

Webinars

- Hartmut Schultze, Matthias Becker and Joachim L. Schultze, DZNE and HPE: Harnessing Memory-Driven Computing to fight Alzheimer’s, Machine User Group, 17.10.2017, https://community.hpe.com/t5/Behind-the-scenes-Labs/WATCH-the-webinar-DZNE-and-HPE-Harnessing-Memory-Driven/ba-p/6980415#.W9yXUpNKj-g

Helmholtz News

- 17.08.2017: Alzheimer’s Research Using Big Data Against Memory Loss; https://www.helmholtz.de/gesundheit/mit_big_data_gegen_gedaechtnisschwund/

Videos

- 01.10.2018: Closer to a Cure: Combating Alzheimer's With New Compute Technology; Great Big Story; https://www.greatbigstory.com/stories/closer-to-a-cure-combatting-alzheimer-s-with-new-compute-technology

- 02.10.2018: Accelerating Alzheimer’s Research; HPE & DZNE customer case study; https://www.hpe.com/us/en/customer-case-studies/dzne-memory-driven.html

- 13.06.2018: CEBIT2018 D!Talk HPE & DZNE on Memory Driven Computing; CEBIT YouTube channel; https://www.youtube.com/watch?v=Fl1ByJ5s7

Press Releases and Press Coverage

- 01.10.2018: DZNE & HPE Memory Driven Computing Whitepaper; HIMSS Europe; https://www.himss.eu/sites/himsseu/files/education/whitepapers/DZNE Alzheimer.pdf

- 19.09.2018: Neue Computerarchitektur soll Demenzforschung beschleunigen [New computer architecture to accelerate dementia research]; Demenz aktuell; https://www.demenz-aktuell.de/neue-computerarchitektur-soll-demenzforschung-beschleunigen.html

- 16.08.2018: Memory-Driven Computing: Rechnen inmitten der Datenmassen [Computing amid masses of data]; VDI Nachrichten; https://www.vdi-nachrichten.com/Fokus/Rechnen-inmitten-Datenmassen

- 13.06.2018: Federal Research Minister Anja Karliczek in conversation with Prof. Joachim Schultze, genome researcher at the DZNE; Facebook; https://www.facebook.com/dzne.de/photos/a.840211549362696/1865779643472543/

- 11.06.2018: Die dunkle Seite der Cebit [The dark side of CeBIT]; Deutsche Welle; https://www.dw.com/de/die-dunkle-seite-der-cebit/a-44165086

- 06.04.2018: Memory-Driven Computing - Demenzforschung auf der Überholspur [Memory-Driven Computing: dementia research in the fast lane]; eGovernment Computing; https://www.egovernment-computing.de/demenzforschung-auf-der-ueberholspur-a-702321/

- 29.03.2018: New Computer Architecture: Time Lapse for Dementia Research; Innovations Report; https://www.innovations-report.com/html/reports/medical-technology/new-computer-architecture-time-lapse-for-dementia-research.html

- 28.03.2018: Neue Computer-Architektur: Zeitraffer für die Demenz-Forschung [New computer architecture: time lapse for dementia research]; Compamed; https://www.compamed.de/cgi-bin/md_compamed/lib/pub/tt.cgi/Neue_Computer-Architektur_Zeitraffer_f%C3%BCr_die_Demenz-Forschung.html?oid=58244&lang=1&ticket=g_u_e_s_t

- 18.09.2017: Interview with Prof. Dr. Joachim Schultze; Die Debatte; https://www.die-debatte.org/alzheimer-interview-schultze/

- 23.03.2017: HPE “The Machine” – speicherorientierte Architektur als Hoffnungsträger [HPE “The Machine”: memory-oriented architecture as a beacon of hope]; Silicon; https://www.silicon.de/41642709/hpe-the-machine-speicherorientierte-architektur-als-hoffnungstraeger

- 20.03.2017: HPE: „The Machine“ soll Alzheimer bekämpfen [HPE: “The Machine” to fight Alzheimer’s]; ZDNet, CEBIT special; https://www.zdnet.de/88290313/hpe-the-machine-soll-alzheimer-bekaempfen/

- 25.07.2018: HPE und das DZNE kooperieren bei der Demenzforschung [HPE and the DZNE cooperate on dementia research]; ZDNet; https://www.zdnet.de/88338471/hpe-und-das-dzne-kooperieren-bei-der-demenzforschung/
